The Agentic Shift Into a Management Role

Introduction

Some time ago, my grandma called me and asked worriedly: “Are you being replaced by AI? The news on TV said that IT employees are being fired.” I calmed her down and told her I’m fine - nothing to worry about. The AI buzz shows no signs of slowing down, and it affects everyone - including our families. That conversation made me stop and think: how is AI actually changing my work?

In this article, I’ll share my insights and examples of how agentic AI is slowly but surely changing the way we work.

The blurry line between individual contributor and manager

Throughout my career, I’ve been in both individual contributor (engineer) and manager (project lead) roles. Right now I’m enjoying being an individual contributor, but who knows - maybe one day I’ll go back to more management-oriented work.

I can vividly remember the joys of being a manager:

- Defining high-level vision and constraints;

- Clarifying requirements from stakeholders;

- Formulating and refining tasks for the team to pick up;

- Reviewing completed work, either providing feedback or accepting it.

Take a close look at each of these tasks. Can you see how they map to the way we work with agentic AI?

- Defining vision and constraints -> Preparing context for agents;

- Refining tasks -> Refining prompts;

- Reviewing work -> Reviewing the output produced by an agent;

- Providing feedback -> Sending follow-up prompts.

By adopting agentic AI, we are gradually moving into more management-oriented roles. We’re no longer just individual contributors - we’re managers of a small but powerful team of agents. That transition requires not only awareness of the shift but also new skills to handle the role effectively.

Agent Manager 101

I’m definitely not going to teach you how to become a great manager in one article. But managing agents is significantly easier than managing humans (unless one day agents come to you demanding a raise). Here are a few things that have worked for me.

Garbage in, garbage out

I’ve seen people complain that AI is stupid and fails to perform the tasks it was given. In those situations, I like to flip the question: how exactly did you define what the AI needed to do?

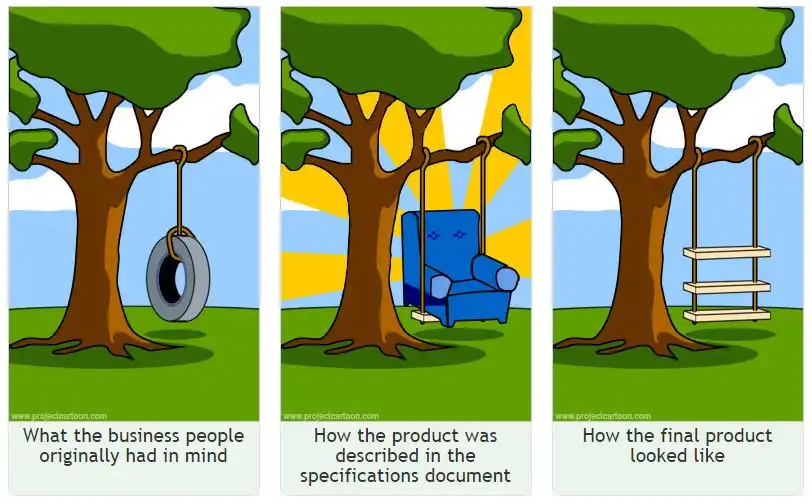

Let’s imagine we have a junior peer instead of an AI agent. If you give them a vaguely defined task like “implement the payments flow in our platform”, what outcome would you expect? Being an inexperienced engineer, that person would most likely be too shy to admit they have no clue where to begin. They’d say “OK, will do” and start scrambling. Eventually you’d get some result, but it probably wouldn’t be what you expected.

The same goes for agentic AI. You need to clearly define the requirements and exactly what needs to be delivered. It’s pretty ironic that we often blame product people for failing to define requirements clearly, while we struggle to give clear instructions to AI. I guess it’s karma.

Requirements engineering is a delicate art. It takes specific skills and knowledge that you’ll need to develop to successfully direct AI. A few tips:

- Use acceptance criteria that can be verified by you or another agent

- Split larger tasks into smaller ones

- Instruct AI agents to ask clarifying questions

- Ask the agent to prepare a plan, review it, provide feedback, and only then allow it to proceed with delivery
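
To make the first tip more concrete, here is a minimal Python sketch of acceptance criteria that can be checked automatically. The names `TaskSpec`, `Criterion` and `unmet_criteria`, as well as the `slugify` task itself, are hypothetical and only illustrate the idea: each criterion pairs a plain-language statement with an automated check, so you (or another agent) can see exactly which requirement a delivery misses.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Criterion:
    """One acceptance criterion: a plain-language statement plus an automated check."""
    description: str
    check: Callable[[Callable[[str], str]], bool]


@dataclass
class TaskSpec:
    """A small, well-scoped task handed to an agent (hypothetical structure)."""
    goal: str
    criteria: list[Criterion] = field(default_factory=list)


def unmet_criteria(spec: TaskSpec, delivered: Callable[[str], str]) -> list[str]:
    """Return the descriptions of all criteria the delivered function fails."""
    failed = []
    for criterion in spec.criteria:
        try:
            ok = criterion.check(delivered)
        except Exception:
            ok = False  # a crash counts as a failed criterion
        if not ok:
            failed.append(criterion.description)
    return failed


spec = TaskSpec(
    goal="Add a slugify(title) helper for blog post URLs",
    criteria=[
        Criterion("Lowercases the title", lambda f: f("Hello") == "hello"),
        Criterion("Replaces spaces with hyphens", lambda f: f("a b c") == "a-b-c"),
        Criterion("Strips leading and trailing whitespace", lambda f: f("  x  ") == "x"),
    ],
)


# A first attempt an agent might deliver: it forgets to strip whitespace.
def first_attempt(title: str) -> str:
    return title.lower().replace(" ", "-")


print(unmet_criteria(spec, first_attempt))  # ['Strips leading and trailing whitespace']
```

The list of unmet criteria doubles as a ready-made follow-up prompt, so your feedback is specific instead of “this doesn’t work”.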

Key principle: The quality of an answer depends on the quality of the question.

Context is everything

Prompt engineering is dead. Long live context engineering! Without going too deep into theory, context engineering is prompt engineering on steroids - you provide not just the question, but also the data needed to produce a good answer.

Let’s say you ask an agent to refactor your application. It gets to work, and once it’s done you review the result only to find the agent completely restructured your app: introduced new patterns, added random third-party dependencies, and more. You didn’t ask for any of that, and now you’re frustrated.

From the agent’s standpoint, it did refactor the code. But you didn’t specify how the goal should be achieved, so that’s on you. This is why we need to give agents as much relevant context as possible when assigning a task. Just like you’d explain the overall architecture, constraints, and expectations to a junior peer, you need to do the same for an agent.

Luckily, agentic tooling lets us reuse and codify that knowledge. For example, you can have a CLAUDE.md file in your project root directory where you put cross-cutting knowledge that gets loaded into every Claude Code session.

My recommendation is to always invest time in preparing such files. Not only do agents benefit from them, but you also get living documentation that agents can update and new engineers can read.
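
Claude Code loads CLAUDE.md on its own, so you don’t need any code for that. Still, the idea behind context engineering fits in a few lines of Python: combine standing project knowledge with task-specific constraints before stating the task itself. The `build_prompt` function and the sample file contents below are hypothetical illustrations, not how Claude Code works internally. Note how the constraints address exactly the surprises from the refactoring example above.

```python
import tempfile
from pathlib import Path


def build_prompt(task: str, constraints: list[str], project_root: Path) -> str:
    """Assemble project context, explicit constraints and the task into one prompt."""
    sections = []
    context_file = project_root / "CLAUDE.md"
    if context_file.is_file():
        # Standing, cross-cutting knowledge shared by every task in the project.
        sections.append("## Project context\n" + context_file.read_text(encoding="utf-8").strip())
    if constraints:
        # Task-specific boundaries: what the agent must not change or introduce.
        sections.append("## Constraints\n" + "\n".join(f"- {c}" for c in constraints))
    sections.append("## Task\n" + task.strip())
    return "\n\n".join(sections)


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "CLAUDE.md").write_text(
        "Hexagonal architecture: domain code must not import adapters.\n"
        "Reuse the existing HTTP client.\n",
        encoding="utf-8",
    )
    prompt = build_prompt(
        "Refactor the order service for readability.",
        ["Keep the public API unchanged", "Do not add new third-party dependencies"],
        root,
    )
    print(prompt)
```

The design choice is simple layering: general project knowledge lives in one reusable file, while constraints change per task and are stated right next to it.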

Key principle: Give agents as much task-relevant context as possible to set them up for success.

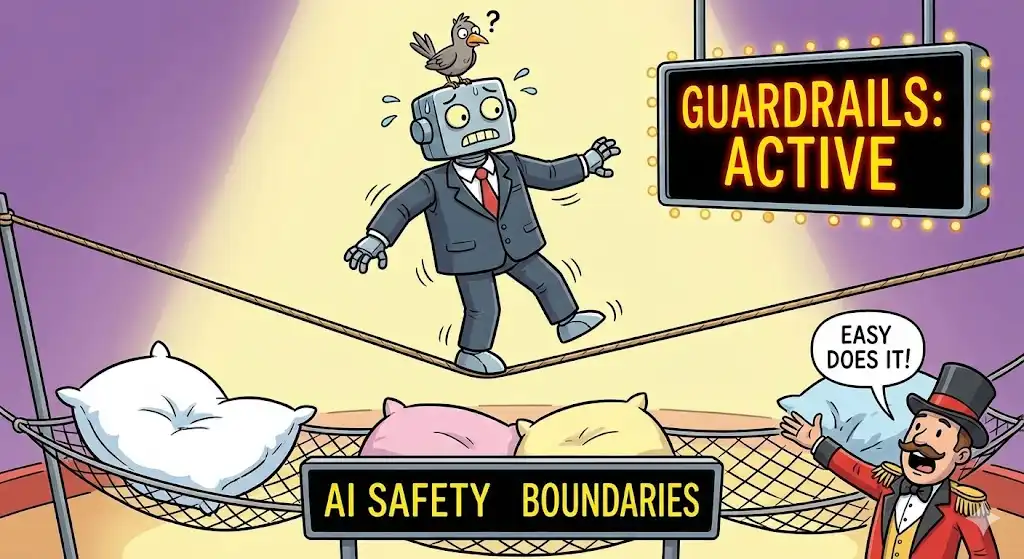

Guardrails

Imagine another situation: the agent successfully completes the work, implements the feature, and it all works as expected - great! But when you push the code and the CI pipeline runs, the linter complains and tests fail. Oops.

Just as you wouldn’t want to nitpick your junior peer’s PR with formatting comments, you want agentic AI to comply with the quality standards you’ve set. Automated quality verification is essential for providing a fast feedback loop so an agent can adapt its solution until it passes quality gates.

Codifying code style using tools like ESLint or Spotless allows:

- The agent to verify whether its output passes the rules. If checks fail, that failure becomes input for the next improvement cycle;

- An automated pipeline to verify that agent-produced output is acceptable;

- You to spend less time on formatting nitpicks, knowing they will be caught automatically.
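
The first point above, where a failed check becomes input for the next cycle, can be sketched in Python. This is a minimal, single-cycle illustration under assumptions: `run_gates` and `feedback_prompt` are hypothetical helpers, and the stand-in gate commands only simulate a passing linter and a failing test run. In a real project you would plug in your own commands, for example `npx eslint .` or `./gradlew spotlessCheck`.

```python
import subprocess
import sys


def run_gates(gates: dict[str, list[str]]) -> dict[str, str]:
    """Run each quality gate; return {gate name: combined output} for the failed ones."""
    failures = {}
    for name, command in gates.items():
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            failures[name] = (result.stdout + result.stderr).strip()
    return failures


def feedback_prompt(failures: dict[str, str]) -> str:
    """Turn gate failures into a follow-up prompt for the agent (empty if all pass)."""
    if not failures:
        return ""
    parts = ["The following quality gates failed. Fix the issues and run them again:"]
    for name, output in failures.items():
        parts.append(f"\n[{name}]\n{output}")
    return "\n".join(parts)


# Stand-in gates so the sketch runs anywhere; replace them with your real
# lint, format and test commands.
gates = {
    "lint": [sys.executable, "-c", "print('ok')"],
    "tests": [sys.executable, "-c", "import sys; print('1 test failed'); sys.exit(1)"],
}
print(feedback_prompt(run_gates(gates)))
```

Repeating this cycle until `run_gates` returns an empty dictionary gives you the fast feedback loop. Agents such as Claude Code can typically run these commands themselves if you tell them which ones to use, for example in your CLAUDE.md.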

Additionally, a good test suite means you can be more confident that the agent accounts for all codified scenarios and avoids introducing breaking changes. If you adopt behavior-driven development, you can write the scenarios yourself and let the agent implement against them - using your tests as guardrails.
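
Here is what that split of responsibilities might look like, as a hypothetical payments example in plain Python: you write the scenarios (Given/When/Then as comments), and the agent writes `refund_amount` until they pass. The 14-day return window and all names are made up for illustration. Dedicated BDD tools such as behave or pytest-bdd would express the same scenarios in Gherkin `.feature` files instead.

```python
from decimal import Decimal

# --- Scenarios you write first (hypothetical business rules) ---


def test_full_refund_within_return_window():
    # Given a purchase of 49.99 made 10 days ago
    paid, days = Decimal("49.99"), 10
    # When the customer requests a refund
    amount = refund_amount(paid, days)
    # Then the full amount is refunded
    assert amount == Decimal("49.99")


def test_no_refund_after_return_window():
    # Given a purchase made 30 days ago
    # When the customer requests a refund
    # Then nothing is refunded
    assert refund_amount(Decimal("49.99"), 30) == Decimal("0")


def test_negative_amounts_are_rejected():
    # Given an invalid, negative payment amount
    # When a refund is calculated
    # Then a ValueError is raised
    try:
        refund_amount(Decimal("-1"), 1)
    except ValueError:
        return
    raise AssertionError("expected ValueError")


# --- Implementation the agent writes to make the scenarios pass ---

RETURN_WINDOW_DAYS = 14  # hypothetical policy value


def refund_amount(paid: Decimal, days_since_purchase: int) -> Decimal:
    """Full refund inside the return window, nothing after it."""
    if paid < 0:
        raise ValueError("paid amount must be non-negative")
    return paid if days_since_purchase <= RETURN_WINDOW_DAYS else Decimal("0")


if __name__ == "__main__":
    for scenario in (
        test_full_refund_within_return_window,
        test_no_refund_after_return_window,
        test_negative_amounts_are_rejected,
    ):
        scenario()
    print("all scenarios pass")
```

Because the scenario functions follow the `test_` naming convention, pytest would also discover and run them automatically.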

Key principle: Automated verification saves time and effort while ensuring the expected quality of output.

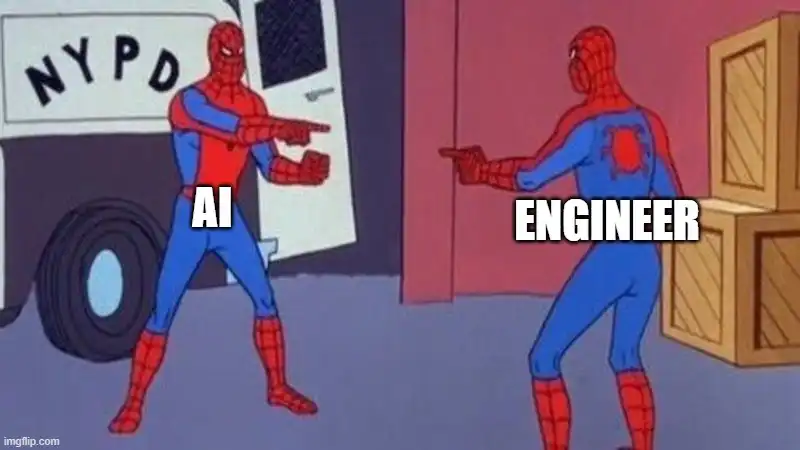

Extreme Ownership

Too often we see low-quality work produced with AI. No wonder “AI slop” has become a common phrase. By adopting the manager mindset, you take ownership of everything your team produces - including its quality. If a peer produced low-quality work, would you simply accept it or push back? The same mindset applies when reviewing code produced by agents.

Personally, I review all changes proposed by agents and critically check whether everything makes sense. If something slips through, “oh, it’s the AI that made this mistake” is not an excuse. AI might have made the mistake, but it’s you who approved the PR - so the responsibility is ultimately yours.

Key principle: You are ultimately responsible for the outputs produced by AI.

Delegation

Last but not least, being a manager is the ultimate test of your delegation skills. Managers have a lot of work thrown at them, but that’s why they have teams. Delegation is a difficult skill to develop - I learned that the hard way as a first-time project lead. More on that experience in this article (sorry, Lithuanian only): Konstantinas Jurgilas, “Kaip naujam vadovui nepaklysti tarp darbų” (“How a new manager can avoid getting lost among tasks”).

It might be tempting to do everything yourself - but the opposite trap exists too. You might want to offload every task to AI. That’s possible, but you need to learn to delegate effectively. It ties back to defining clear requirements, giving context, and setting guardrails. Ultimately, you’re the one who defines the goal and vision. You decide which features get built today, what the acceptable test coverage is, and when to tackle that technical debt. You need to decide what gets delegated to agents and what stays with you.

Key principle: Effective delegation and your role as a visionary are crucial for overall success.

Summary

AI is definitely changing the way we work. Am I worried about it? Not really. Our industry is always evolving. We used to talk about a new JavaScript framework every week - now we talk about new LLMs. It’s interesting to develop new skills, adapt to changing roles, and find new ways of working.

As long as we stay open to change and embrace the need to step up and adapt, I can reassure my grandma: nothing to worry about.

Yet?… 😁